(Peer-Reviewed) Universal Adversarial Examples and Perturbations for Quantum Classifiers

Weiyuan Gong ¹, Dong-Ling Deng 邓东灵 ¹ ²

¹ Center for Quantum Information, IIIS, Tsinghua University, Beijing 100084, People's Republic of China 清华大学 交叉信息研究院 量子信息中心

² Shanghai Qi Zhi Institute, 41th Floor, AI Tower, No. 701 Yunjin Road, Xuhui District, Shanghai 200232, China 上海期智研究院

National Science Review, 2021-07-22

Abstract

Quantum machine learning explores the interplay between machine learning and quantum physics, which may lead to unprecedented perspectives for both fields. In fact, recent works have shown strong evidence that quantum computers could outperform classical computers in solving certain notable machine learning tasks. Yet quantum learning systems may also suffer from the vulnerability problem: adding a tiny, carefully crafted perturbation to legitimate input data can cause a system to make incorrect predictions at a notably high confidence level.

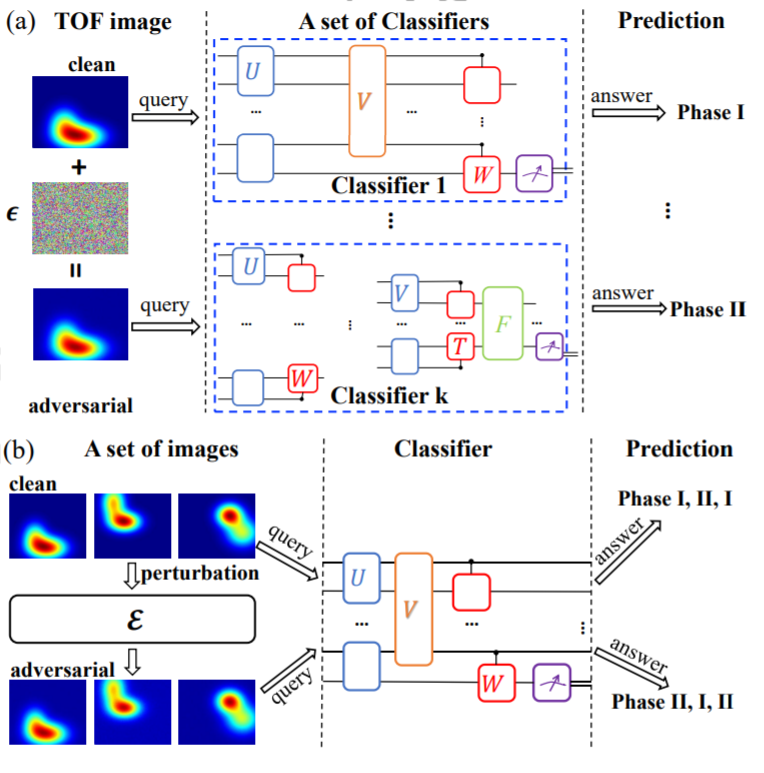

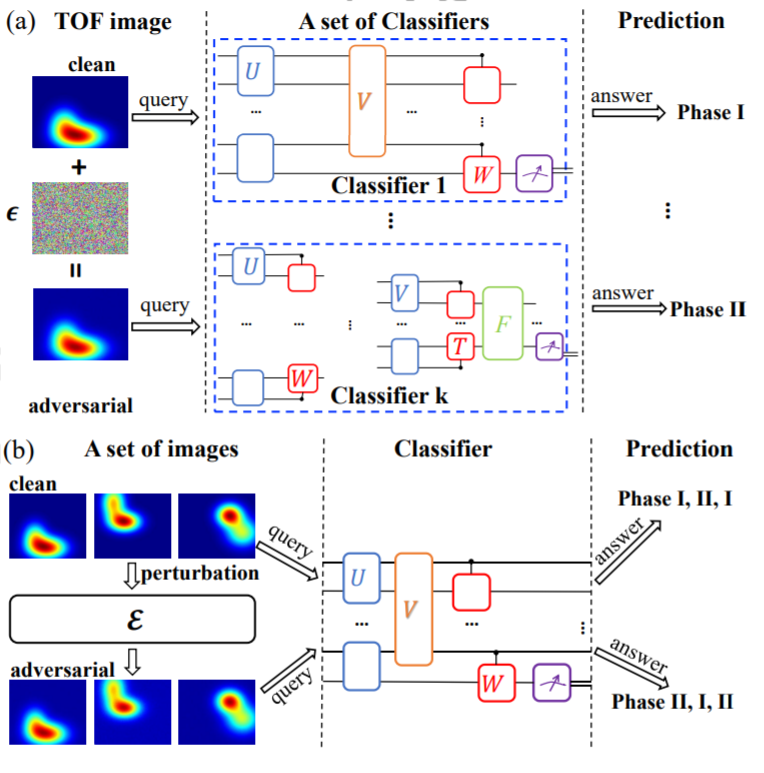

In this paper, we study the universality of adversarial examples and perturbations for quantum classifiers. Through concrete examples involving classifications of real-life images and quantum phases of matter, we show that there exist universal adversarial examples that can fool a set of different quantum classifiers. We prove that for a set of k classifiers, each receiving input data of n qubits, an O(ln k/2ⁿ) increase of the perturbation strength is enough to ensure a moderate universal adversarial risk.
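To get a feel for the scaling stated above, the following minimal sketch evaluates the term ln k/2ⁿ for a few values of k and n. The function name `scaling_term` and the chosen values are illustrative assumptions, not taken from the paper, and the constant hidden by the O(·) notation is not known here, so only the relative trend is meaningful.

```python
import math


def scaling_term(k: int, n: int) -> float:
    """Return ln(k) / 2**n, the quantity inside the O(.) bound.

    Hypothetical helper for illustration only: the prefactor hidden by
    the big-O notation is omitted, so absolute values carry no meaning.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    if n < 0:
        raise ValueError("n must be non-negative")
    return math.log(k) / 2 ** n


if __name__ == "__main__":
    # Illustrative values only: the term grows logarithmically in the
    # number of classifiers k and shrinks exponentially in the qubits n.
    for n in (4, 8, 12):
        for k in (2, 10, 100):
            print(f"n={n:2d}  k={k:3d}  ln(k)/2^n = {scaling_term(k, n):.3e}")
```

Under these assumptions, adding more classifiers raises the term only logarithmically, while each extra qubit halves it.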

In addition, for a given quantum classifier, we show that there exist universal adversarial perturbations, which can be added to different legitimate samples to turn them into adversarial examples for the classifier.

Our results reveal the universality perspective of adversarial attacks for quantum machine learning systems, which would be crucial for practical applications of both near-term and future quantum technologies in solving machine learning problems.
