(Preprint) CLSEBERT: Contrastive Learning for Syntax Enhanced Code Pre-Trained Model

Xin Wang ¹, Yasheng Wang ², Pingyi Zhou ², Meng Xiao ², Yadao Wang ², Li Li ³, Xiao Liu ⁴, Hao Wu 武浩 ⁵, Jin Liu 刘进 ¹, Xin Jiang ²

¹ School of Computer Science, Wuhan University

² Noah's Ark Lab, Huawei

³ Faculty of Information Technology, Monash University

⁴ School of Information Technology, Deakin University

⁵ School of Information Science and Engineering, Yunnan University

arXiv, 2021-08-10

Abstract

Pre-trained models for programming languages have proven their value in various code-related tasks, such as code search, code clone detection, and code translation. Currently, most pre-trained models treat a code snippet as a sequence of tokens or focus only on the data flow between code identifiers.

However, these models ignore the rich syntax and hierarchy of code, which can provide important structural information and semantic rules that help enhance code representations. In addition, although BERT-based code pre-trained models achieve high performance on many downstream tasks, the sequence representations natively derived from BERT have been shown to be of low quality, and they perform poorly on code matching and similarity tasks.

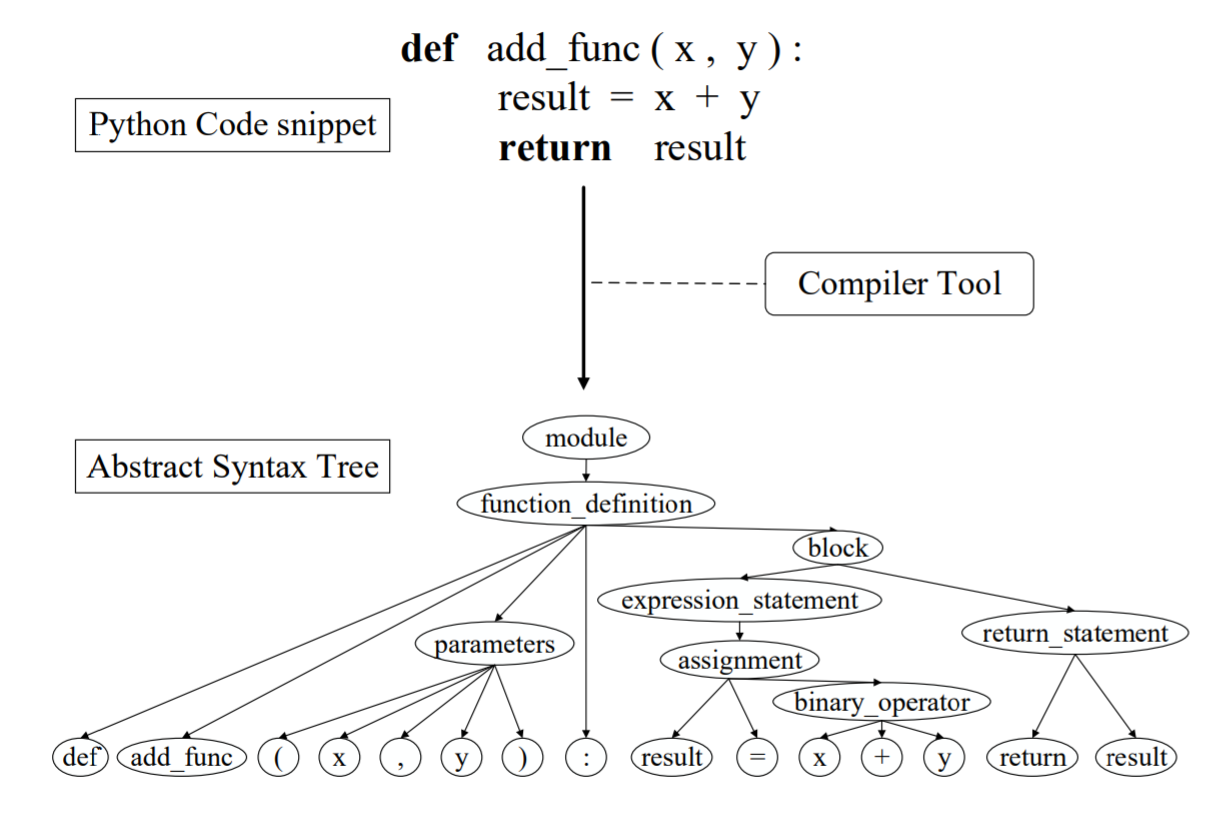

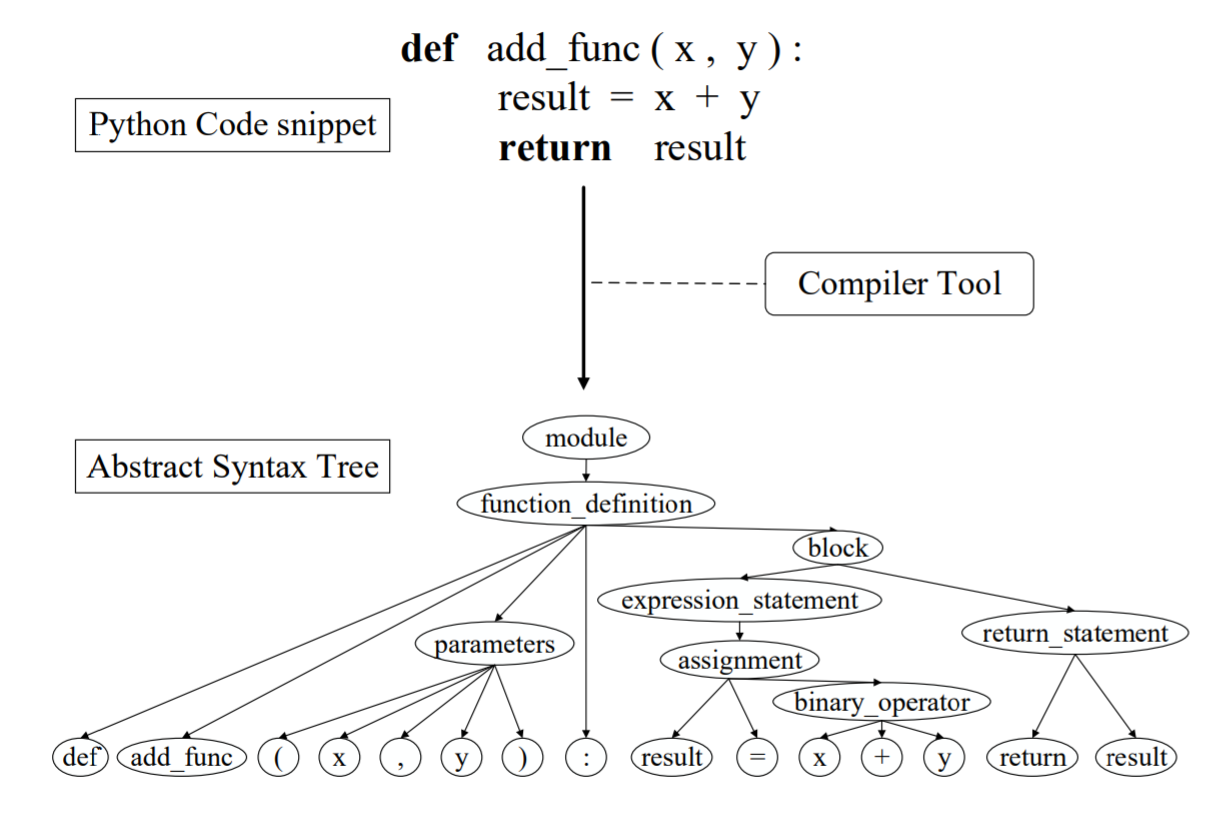

To address these problems, we propose CLSEBERT, a Contrastive Learning Framework for Syntax Enhanced Code Pre-Trained Model, to deal with various code intelligence tasks. In the pre-training stage, we consider the code syntax and hierarchy contained in the Abstract Syntax Tree (AST) and leverage contrastive learning to learn noise-invariant code representations. Besides masked language modeling (MLM), we also introduce two novel pre-training objectives. One is to predict the edges between nodes in the abstract syntax tree, and the other is to predict the types of code tokens. Through extensive experiments on four code intelligence tasks, we demonstrate the effectiveness of our proposed model.
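To make the two structural objectives more concrete, the following is a minimal sketch of how supervision labels for them could be derived from a code snippet. It assumes Python source and uses the standard-library `ast` and `tokenize` modules as stand-ins; the parser, tokenizer, and label vocabulary actually used by CLSEBERT are not stated in this abstract, and the helper names `ast_edges` and `token_types` are hypothetical.

```python
import ast
import io
import tokenize


def ast_edges(source):
    """Return (parent_type, child_type) pairs for every parent-child edge in the AST.

    Assumption: an edge-prediction objective could use such pairs as positive
    examples; the paper's exact formulation is not given in the abstract.
    """
    tree = ast.parse(source)
    edges = []
    for parent in ast.walk(tree):
        for child in ast.iter_child_nodes(parent):
            edges.append((type(parent).__name__, type(child).__name__))
    return edges


def token_types(source):
    """Return (token_string, lexical_type) pairs, skipping layout-only tokens.

    Assumption: lexical categories from `tokenize` stand in for whatever
    token-type labels the model is trained to predict.
    """
    layout = {
        tokenize.NEWLINE,
        tokenize.NL,
        tokenize.INDENT,
        tokenize.DEDENT,
        tokenize.ENDMARKER,
    }
    pairs = []
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type not in layout:
            pairs.append((tok.string, tokenize.tok_name[tok.type]))
    return pairs


snippet = "def add(a, b):\n    return a + b\n"
edges = ast_edges(snippet)
types = token_types(snippet)
```

Here `edges` contains pairs such as `("Module", "FunctionDef")` and `("Return", "BinOp")`, while `types` pairs each surface token with a coarse category such as `NAME` or `OP`. In a pre-training setup, labels of this kind would supervise the edge-prediction and token-type heads alongside the MLM loss.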
