(Preprint) Neural Architecture Dilation for Adversarial Robustness

Yanxi Li ¹, Zhaohui Yang ² ³, Yunhe Wang 王云鹤 ², Chang Xu ¹

¹ School of Computer Science, University of Sydney, Australia

² Noah’s Ark Lab, Huawei Technologies, China

(Huawei Noah's Ark Lab, Hong Kong, China)

³ Key Lab of Machine Perception (MOE), Department of Machine Intelligence, Peking University, China

(Key Laboratory of Machine Perception and Intelligence, Ministry of Education, Peking University, Beijing, China)

arXiv, 2021-08-16

Abstract

With the tremendous advances in the architecture and scale of convolutional neural networks (CNNs) over the past few decades, CNNs can reach or even exceed human performance on certain tasks. However, a recently discovered shortcoming of CNNs is that they are vulnerable to adversarial attacks. Although adversarial training can improve the adversarial robustness of CNNs, it introduces a trade-off between standard accuracy and adversarial robustness.

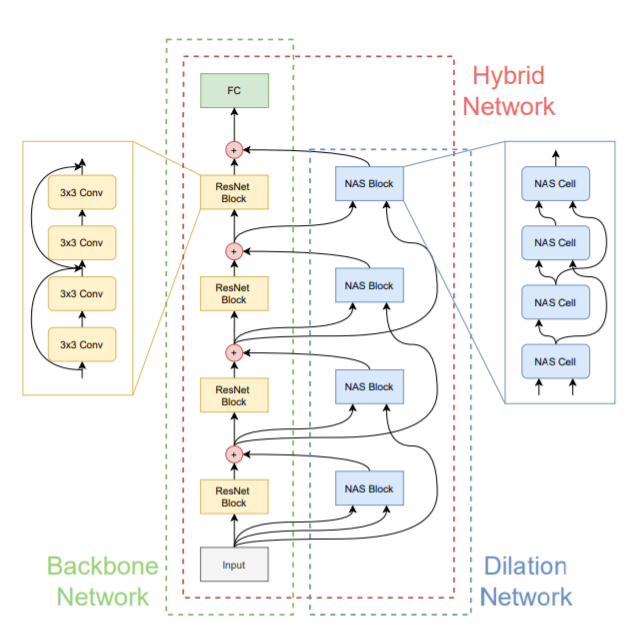

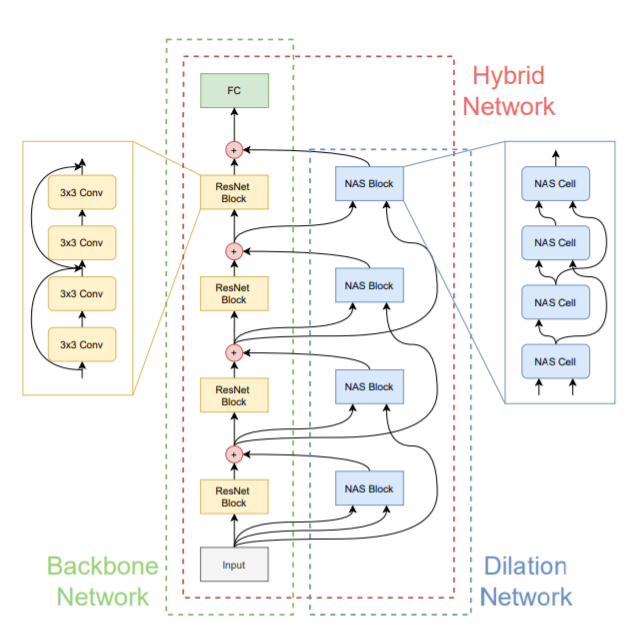

From the neural architecture perspective, this paper aims to improve the adversarial robustness of backbone CNNs that already achieve satisfactory accuracy. With minimal computational overhead, the introduced dilation architecture is expected to preserve the standard performance of the backbone CNN while pursuing adversarial robustness. Theoretical analyses of the standard and adversarial error bounds naturally motivate the proposed neural architecture dilation algorithm. Experimental results on real-world datasets and benchmark neural networks demonstrate the effectiveness of the proposed algorithm in balancing accuracy and adversarial robustness.
