(Preprint) Modeling Relevance Ranking under the Pre-training and Fine-tuning Paradigm

Lin Bo ¹, Liang Pang 庞亮 ³, Gang Wang ⁴, Jun Xu 徐君 ², XiuQiang He 何秀强 ⁴, Ji-Rong Wen 文继荣 ²

¹ School of Information, Renmin University of China, Beijing, China

² Gaoling School of Artificial Intelligence, Renmin University of China, Beijing, China

³ Institute of Computing Technology, Chinese Academy of Sciences, Beijing, China

⁴ Huawei Noah’s Ark Lab, Hong Kong, China

arXiv, 2021-08-12

Abstract

Recently, pre-trained language models such as BERT have been applied to document ranking for information retrieval. These methods usually first pre-train a general language model on a large unlabeled corpus and then conduct ranking-specific fine-tuning on expert-labeled relevance datasets. Though preliminary successes have been observed in a variety of IR tasks, considerable room remains for further improvement.

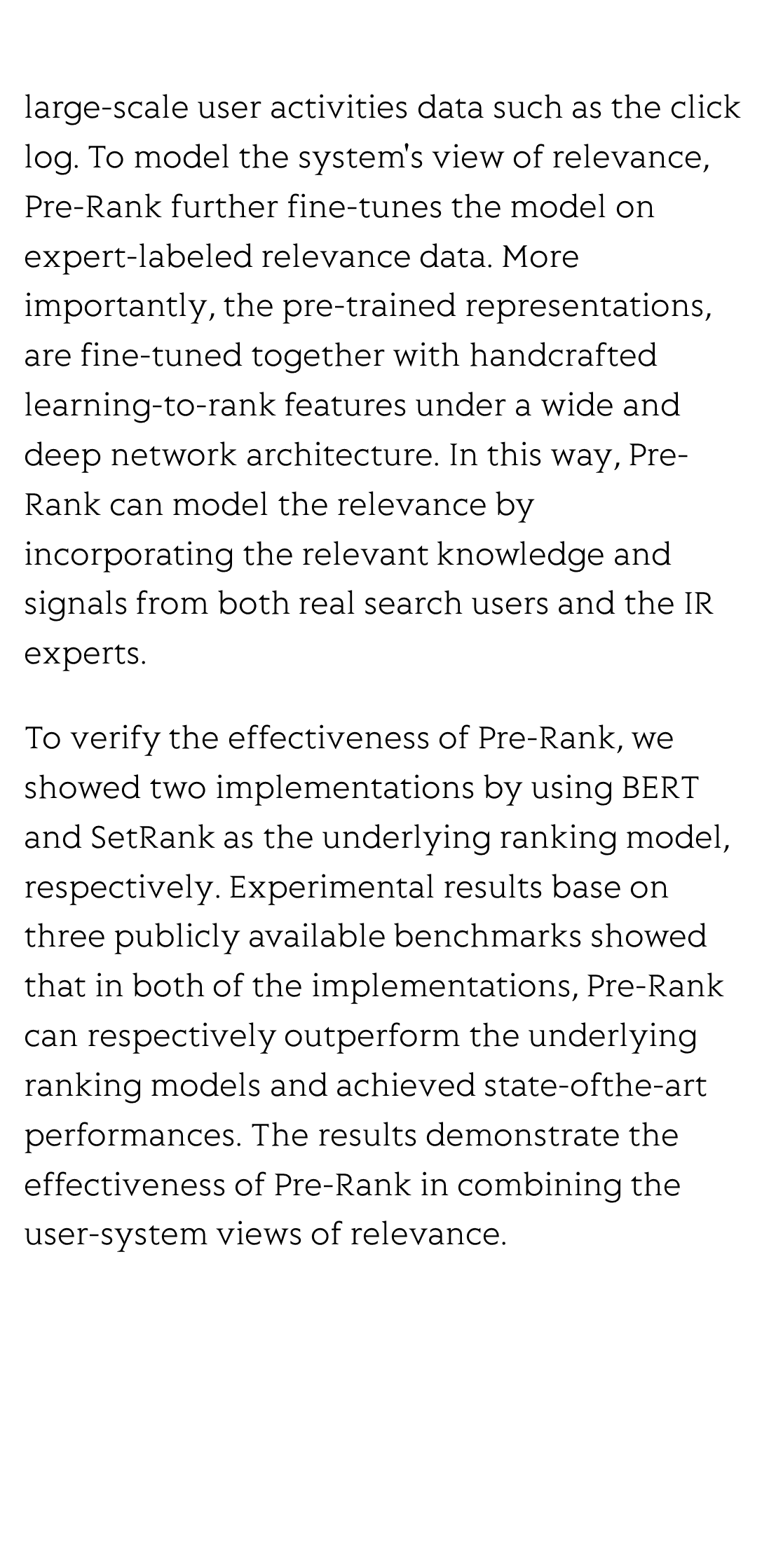

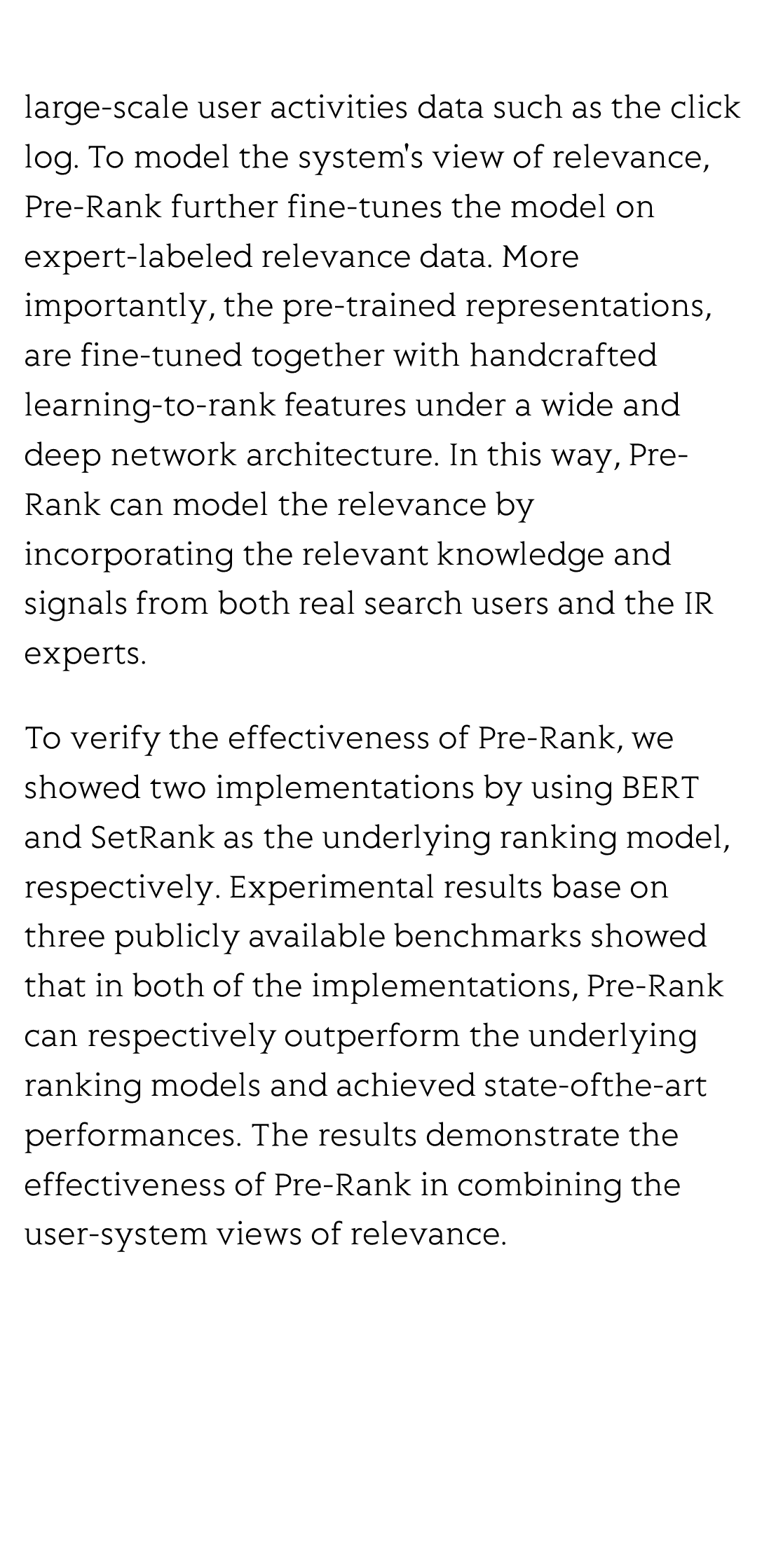

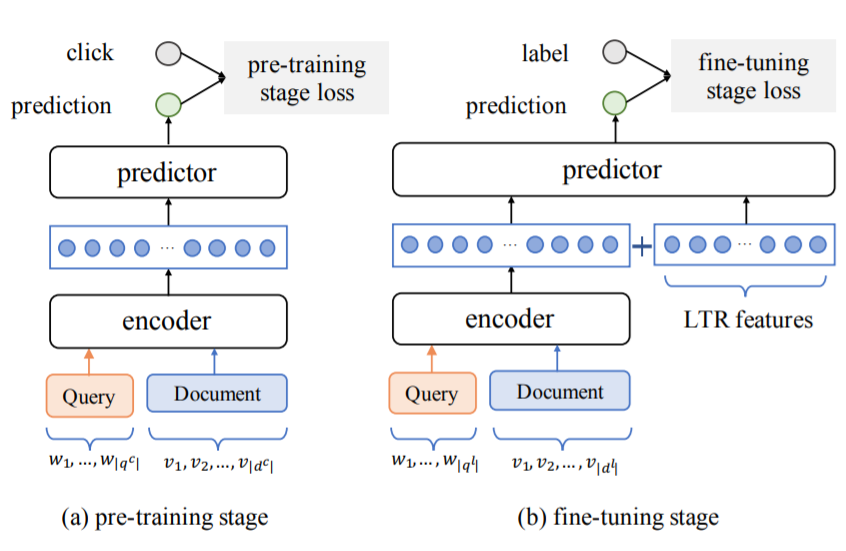

Ideally, an IR system would model relevance from a user-system dualism: the user's view and the system's view. The user's view judges relevance based on the activities of “real users”, while the system's view focuses on relevance signals from the system side, e.g., from experts or algorithms. Inspired by these two views of relevance and the success of pre-trained language models, in this paper we propose a novel ranking framework called Pre-Rank that takes both the user's view and the system's view into consideration under the pre-training and fine-tuning paradigm. Specifically, to model the user's view of relevance, Pre-Rank pre-trains the initial query-document representations on large-scale user activity data such as click logs. To model the system's view of relevance, Pre-Rank further fine-tunes the model on expert-labeled relevance data. More importantly, the pre-trained representations are fine-tuned together with handcrafted learning-to-rank features under a wide and deep network architecture. In this way, Pre-Rank can model relevance by incorporating the relevant knowledge and signals from both real search users and IR experts.
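As a rough illustration of the wide and deep combination described above, the following minimal sketch scores a query-document pair by adding a "deep" logit, computed from a pre-trained representation vector, to a "wide" logit, computed linearly from handcrafted learning-to-rank features. It is not the authors' implementation: the class `WideDeepScorer`, the function `rank`, the layer sizes, and the random initialization are all hypothetical, the representation is assumed to be supplied by some pre-trained encoder, and training is omitted.

```python
import random


def relu(x):
    """Element-wise rectified linear unit."""
    return [max(0.0, v) for v in x]


def linear(x, weights, bias):
    """Affine map; `weights` holds one row of input weights per output unit."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


class WideDeepScorer:
    """Hypothetical wide-and-deep scorer (illustrative only, untrained)."""

    def __init__(self, rep_dim, feat_dim, hidden_dim=4, seed=0):
        rng = random.Random(seed)

        def init(n_out, n_in):
            # Small random weights; a real model would learn these.
            return [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]

        self.w_hidden = init(hidden_dim, rep_dim)  # deep part: hidden layer
        self.b_hidden = [0.0] * hidden_dim
        self.w_deep = init(1, hidden_dim)          # deep part: output weights
        self.w_wide = init(1, feat_dim)            # wide part: linear weights
        self.bias = 0.0

    def score(self, rep, feats):
        """Relevance score = deep logit (representation) + wide logit (features)."""
        hidden = relu(linear(rep, self.w_hidden, self.b_hidden))
        deep_logit = linear(hidden, self.w_deep, [0.0])[0]
        wide_logit = linear(feats, self.w_wide, [0.0])[0]
        return deep_logit + wide_logit + self.bias


def rank(scorer, candidates):
    """Sort candidate documents by descending score."""
    return sorted(candidates, key=lambda c: scorer.score(c["rep"], c["feats"]), reverse=True)


if __name__ == "__main__":
    scorer = WideDeepScorer(rep_dim=3, feat_dim=2)
    docs = [
        {"id": "d1", "rep": [0.2, 0.1, 0.4], "feats": [1.5, 0.3]},
        {"id": "d2", "rep": [0.9, 0.0, 0.1], "feats": [0.2, 0.8]},
    ]
    print([d["id"] for d in rank(scorer, docs)])
```

In this sketch the two parts are summed into a single logit so that, under joint fine-tuning, gradients would reach both the representation pathway and the feature weights; how Pre-Rank actually combines them is not specified in the abstract.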

To verify the effectiveness of Pre-Rank, we present two implementations that use BERT and SetRank, respectively, as the underlying ranking model. Experimental results on three publicly available benchmarks show that, in both implementations, Pre-Rank outperforms its underlying ranking model and achieves state-of-the-art performance. The results demonstrate the effectiveness of Pre-Rank in combining the user and system views of relevance.
